“The Ethics of Zero-Day Exploits: The NSA Meets the Trolley Car”

Communications of the ACM, January 2021, Vol. 64 No. 1, Pages 97-103

Review Articles

By Stephen B. Wicker

“This article [takes] two basic approaches to evaluating the ethics of stockpiling zero-day exploits.”

The May 2017 WannaCry ransomware attack caused a great deal of damage across Europe and Asia, wreaking particular havoc with Britain’s National Health Service. The attack exploited a Microsoft Windows vulnerability that had been discovered and exploited by the U.S. National Security Agency. The NSA informed Microsoft of the vulnerability, but only after the NSA had lost control of the assets it had developed to take advantage of the vulnerability. Shortly after the attack, Microsoft President and Chief Legal Officer Brad Smith characterized the NSA and CIA’s stockpiling of vulnerabilities as a growing problem:

“Finally, this attack provides yet another example of why the stockpiling of vulnerabilities by governments is such a problem. This is an emerging pattern in 2017. We have seen vulnerabilities stored by the CIA show up on WikiLeaks, and now this vulnerability stolen from the NSA has affected customers around the world. Repeatedly, exploits in the hands of governments have leaked into the public domain and caused widespread damage.”

Smith asserted that stockpiling of vulnerabilities, as opposed to immediately informing the software vendor, was wrong, in part because of its effects on Microsoft’s customers. A national security operative might argue, however, that these same customers enjoyed a greater benefit through increased personal safety. As an example, this operative might point to the Stuxnet worm. Stuxnet took advantage of four Microsoft Windows vulnerabilities to attack a set of centrifuges that were critical to Iran’s nuclear program. This highly sophisticated attack, created and delivered by agents of the U.S. and Israeli governments, may have saved the lives of potential targets of the Iranian nuclear program.

An ethical dilemma presents itself: Are U.S. government employees behaving ethically when they stockpile software vulnerabilities? To address this question, I begin by reviewing the nature of these vulnerabilities and the resulting “zero-day” exploits. I then consider whether participation in stockpiling is permissible from an ethical standpoint. This is a difficult problem, as the standard consequentialist arguments on which current policy is based are crippled from the outset by their need to cope with a great deal of uncertainty. Other complications include the alleged inability of decision makers to share the bases for their decisions with the general public, as well as a form of regulatory capture—those in a position to perform the ethical calculus are the same ones who will exploit the vulnerabilities. I argue these issues can be avoided by using a non-consequentialist approach. By creating detailed case studies for the ethical issues in play, computer scientists can develop a technically informed ethical intuition, and be in a better position to assist with policy moving forward.

Bugs, Vulnerabilities, and Exploits

Bugs have plagued computers and computer software since the six- and eight-legged varieties found their way into the electromechanical switches of UNIVAC. The problem continues today in the form of coding errors that lead to unexpected behavior on the part of computer software. Delivered code has been estimated to average from 15 to 50 errors per 1,000 lines across the industry. Through “cleanroom” techniques the number can be brought close to zero, but this is expensive, time-consuming, and usually limited to highly specialized and strictly compartmentalized government projects such as the space shuttle.
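To give a sense of scale for the 15 to 50 errors per 1,000 lines figure quoted above, here is a minimal arithmetic sketch. The function name `estimated_defects` and the two-million-line codebase are hypothetical, chosen only for illustration; the density range is the one stated in the text.

```python
def estimated_defects(lines_of_code, low_per_kloc=15, high_per_kloc=50):
    """Return a (low, high) estimate of latent errors in delivered code.

    The default densities are the industry range quoted in the article;
    the function itself is an illustrative assumption, not a real metric tool.
    """
    kloc = lines_of_code / 1000  # thousands of lines of code
    return (kloc * low_per_kloc, kloc * high_per_kloc)


# Hypothetical codebase size, for illustration only.
low, high = estimated_defects(2_000_000)
print(f"{low:,.0f} to {high:,.0f} estimated errors")
```

Only some fraction of such errors would be exploitable vulnerabilities; this sketch counts errors, not vulnerabilities.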

Bugs manifest themselves in a wide variety of forms, from the occasional crash to more subtle though potentially more dangerous behavior. Bugs rise to the level of vulnerabilities when they allow third parties to use the software in a manner that the scientist/engineer who wrote the code did not intend. For example, some vulnerabilities may allow a third party to see information for which he or she is not authorized, while the worst allow a hacker to load and run malware on the machine on which the vulnerabilities reside [9]. If the software vendor is unaware of a vulnerability in its product, the term “zero-day vulnerability” applies. “Zero-day” refers to the number of days the vendor has been aware of the vulnerability (zero), and thus to the software’s continuing susceptibility to attack.

A “zero-day exploit” is an attack that takes advantage of a zero-day vulnerability to compromise data on a target machine or to deliver and run malicious code on that machine. Zero-day exploits generally have two parts: the exploit code that gains access to a machine through a vulnerability, and an often-unrelated payload that is delivered to the machine once the exploit has gained access.

Vulnerabilities in software are found through many means, but most techniques fall under three general headings: white box, gray box, and black box. The white box approach assumes complete access to source code, design specifications, and in some cases the programmers themselves. The black box approach takes the opposite extreme, assuming no knowledge of the internal structure of the software. As one might imagine, gray box attacks fall somewhere in between. In many cases, gray box attacks begin as black box attacks, but become increasingly gray as knowledge of the behavior of the target allows for refinement of the attack.
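As a rough illustration of the black box approach, the sketch below uses random input fuzzing, one common black box technique that this excerpt does not itself name. Everything here is an assumption made for the example: `parse_record` is a hypothetical target with a deliberately planted bug, and `fuzz` is a toy harness that treats the target as opaque, observing only whether it fails in an undocumented way.

```python
import random


def parse_record(data: bytes) -> int:
    """Hypothetical target: first byte declares a length, the rest is the body."""
    if len(data) < 1:
        raise ValueError("empty input")  # documented, expected rejection
    declared_len = data[0]
    body = data[1:]
    # Planted bug: the declared length is trusted without a bounds check.
    return body[declared_len - 1] if declared_len else 0


def fuzz(target, trials=1000, max_len=8, seed=0):
    """Feed random byte strings to target and record undocumented failures."""
    rng = random.Random(seed)  # seeded so a run can be repeated
    crashes = []
    for _ in range(trials):
        length = rng.randrange(max_len + 1)
        data = bytes(rng.randrange(256) for _ in range(length))
        try:
            target(data)
        except ValueError:
            pass  # the target rejected the input in its documented way
        except Exception as exc:
            crashes.append((data, type(exc).__name__))
    return crashes


crashes = fuzz(parse_record)
print(f"{len(crashes)} crashing inputs found")
```

The harness never inspects the source of `parse_record`. Using what the crashes reveal (here, that the first byte matters) to generate more targeted inputs is the kind of refinement that moves a black box attack toward gray box.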

… [There’s lots of detail, read the full article to find out.]

Conclusion and Further Thoughts

In this article I have taken two basic approaches to evaluating the ethics of stockpiling zero-day exploits. I have argued that the consequentialist approach has significant difficulties, primarily due to problematic underlying assumptions and a need to balance objectives that are probabilistic, or even incommensurable.

The non-consequentialist approach, on the other hand, offers more traction, capturing our ethical intuition regarding the public risk that many think is inherent in zero-day exploits in particular and cyberwarfare in general. Public policy that attempts to educate the public about stockpiling while reducing general risk will find more favor with the public. Perhaps of equal importance, those who are involved with the stockpiling and the development of exploits will have greater cause to feel they are both defending their country and engaging in demonstrably ethical activity.

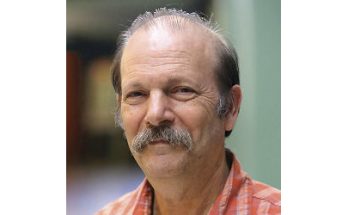

About the Author:

Stephen B. Wicker is a professor of electrical and computer engineering at Cornell University, Ithaca, NY, USA.

See also:

- Tools and Weapons: The Promise and the Peril of the Digital Age by Brad Smith and Carol Ann Browne.